Chaos Engineering. How High-Performing Teams Find Weak Points Before Users Do

%20(1).avif)

Staff Writer

.avif)

Ever had a “normal” deploy turn into a surprise outage in your microservices, and suddenly the whole day becomes incident response? We've been there too, and chaos engineering is one of the most practical ways we've found to turn those surprises into planned learning.

Key Principles of Chaos Engineering

When chaos engineering works, it feels less like “breaking stuff” and more like a disciplined reliability practice inside DevOps. We start with a steady state (what “healthy” looks like). We write a hypothesis and then run automated experiments that inject faults in production environments or production-like environments.

From there, observability does the heavy lifting. Metrics, logs, and traces tell us if customers stayed safe and where the system got brittle.

- Hypothesis first: write what should happen, and what must not happen.

- Measure customer impact: availability, latency injection outcomes, error rates, and key user journeys.

- Small blast radius: one service, one region, one dependency, one change at a time.

- Abort rules: alarms and “stop” controls that end the experiment fast.

- Fix forward: every experiment should produce a concrete engineering change, not just a slide deck.

Embracing failure as a learning opportunity

We treat failure like data. The goal is to expose small, controlled faults so we can build real system resilience before customers feel anything.

This mindset is what made early tools like Netflix's Chaos Monkey influential. It forced teams to design for instance loss as a normal condition, not a rare exception.

- Start with “safe-to-fail”: kill one pod, inject latency on one endpoint, or throttle one dependency.

- Practice reversibility: if you can't stop it quickly, it's not ready for production environment testing.

- Train the response path: the experiment should exercise runbooks, alerts, and on-call decision-making.

- Make it routine: a small weekly drill beats a once-a-year big event.

Creating hypotheses and measurable objectives

We form a tight hypothesis, then we test it, we measure what matters.

We don't start with tools. We start with a question like, “If a node disappears, will checkout still succeed within our SLO?”

Google's SRE workbook example shows the math clearly. If you handle 1,000,000 requests in four weeks and your availability SLO is 99.9%, you can only “spend” 1,000 bad requests in that window before you miss the objective.

- Write the steady state: pick a few SLIs (availability, latency, error rate) that reflect real user value.

- Set a stop rule: define the alarm that ends the experiment if risk rises.

- Define pass or fail up front: avoid “we'll interpret it later” outcomes.

- Record results like an incident: timeline, impact, fix, and next action.

Testing in production-like environments

Testing in production-like environments means your experiment matches real dependencies, real traffic shapes, and real operational constraints without gambling with your highest-risk customers.

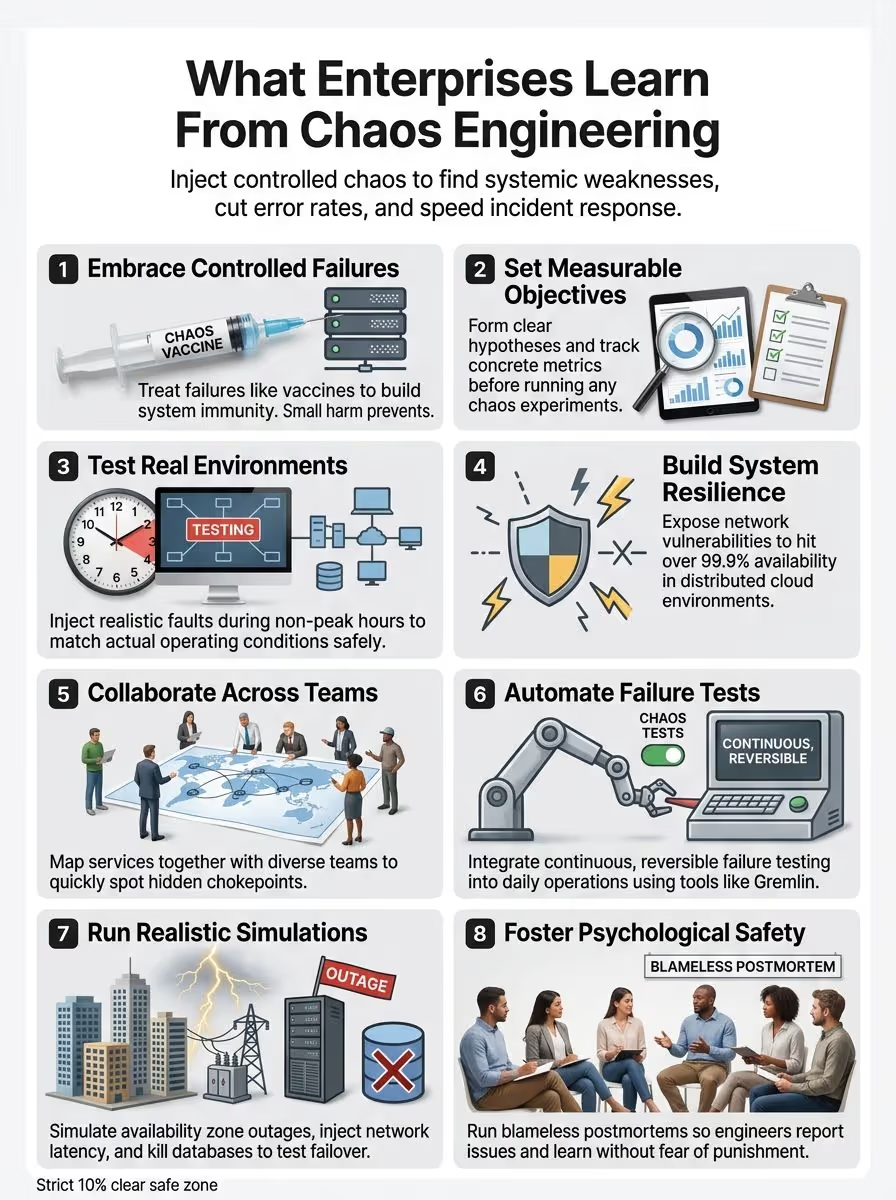

Lessons Enterprises Are Learning from Chaos Engineering

Enterprises don't adopt chaos engineering because it sounds interesting. They adopt it because distributed systems fail in messy, real ways, and hope does not run a production environment.

When we run fault injection and latency injection with solid observability, we learn which parts of our architecture are resilient and which parts only look resilient on paper.

- Microservices multiply failure modes: retries, timeouts, caches, and queues can amplify small issues.

- Tooling is not the hard part: the hard part is choosing the right experiments and acting on results.

- Reliability is cross-team: network, platform, app, and data teams all own pieces of the outcome.

Building resilience in distributed systems

Resilience is not one feature. It's a set of behaviors your system can prove under stress.

We like to map each experiment to a resilience mechanism so teams can see exactly what's being validated.

- Pick one user journey: checkout, login, search, or data ingest.

- List dependencies: DNS, gateways, queues, object storage, databases, third parties.

- Prove the fallback works: cached reads, queued writes, or a “read-only” mode.

Understanding system vulnerabilities

Vulnerabilities in production environments are often “boring” details: a missing timeout, a bad readiness check, or an alert that never fires. A startup probe can keep liveness and readiness from flapping during initialization, and that can prevent noisy restarts during load generation or deployments.

- Probe what matters: readiness should represent “safe to receive traffic,” not “process is alive.”

- Kill the happy-path assumption: inject slow DNS, packet loss, or a partial dependency outage.

- Watch for secondary failures: memory growth, connection pool exhaustion, and queue backlog.

- Document the failure mode: turn each discovery into a runbook entry with a clear trigger and a fix.

Improving incident response times

Chaos engineering helps incident response because it rehearses the same decisions made during real incidents but with guardrails. We recommend explicit roles like incident commander, scribe, customer liaison, and subject matter experts, which keeps calls focused when stress climbs.

- Run a 30-minute drill: pick one scenario, one system, one outcome.

- Time-box diagnosis: assign an owner for each hypothesis and set short check-in times.

- Close the loop: every drill should create an engineering ticket and an ops update.

Effective Practices for Chaos Engineering

We keep chaos engineering effective by making it routine, measurable, and safe for customers. That means small experiments, clear rollback, and fast follow-up.

- Pick the service: choose one microservice with clear SLIs and owners.

- Define the blast radius: limit to one namespace, one tenant group, or one zone.

- Set stop conditions: alarms, error budget burn limits, or a manual “halt all” rule.

- Run the experiment: inject one fault type, then observe.

- Write the follow-up: fix, retest, and update runbooks.

Cross-functional collaboration

Chaos engineering is a team sport. Platform, app, security, and product perspectives all reduce blind spots.

One thing we've found useful is turning “tribal knowledge” into a shared dependency map that anyone can validate.

- Start with customer flows: what users do, and what revenue paths depend on it.

- Map dependencies: APIs, queues, caches, databases, and identity services.

- Agree on guardrails: what's allowed during experiments, and what is off-limits.

- Write a shared runbook: if only one person can restore the service, you don't have resilience.

Structured reflection and follow-up

A chaos experiment without follow-up is just stress. The value shows up when you turn results into fixes, and then verify the fixes under the same failure testing scenario. Learn from incidents without blame and make systems more reliable because of it.

- Write a short timeline: what happened, what we saw, what we did, what worked.

- Name one root cause and one contributing factor: avoid “everything broke” summaries.

- Create owners and deadlines: tie each fix to a person and a date.

- Update the runbook: add the signal, the decision, and the action steps.

- Re-run the experiment: confirm the system behavior improved, not just the narrative.

Automated failure testing

Automation matters because resilience is not a one-time project. Systems change every week through CI/CD, dependency upgrades, and configuration drift.

We like tools that support repeatable experiments, scoped targeting, and fast stop controls.

Role of Chaos Engineering in Enterprise Operations

In enterprise ops, chaos engineering becomes a reliability habit, not a special event.

It plugs into how site reliability engineers and DevOps teams already work: dashboards, alerts, on-call, and iterative delivery.

- Before releases: verify failure modes that tend to break during deployments.

- After changes: run a small regression experiment to catch new weak points.

- During operations: rehearse the response and validate observability.

Enhancing system reliability

Reliability improves when teams can prove behaviors like failover, throttling, and degraded mode under controlled stress.

We usually start with a single service and one experiment type, then expand as confidence grows.

Reducing downtime

Downtime rarely comes from one big failure. It usually comes from a chain reaction that nobody has rehearsed.

That's why we favor experiments that reproduce the most common chains, then break them with better defaults.

- Zone impairment drills: verify traffic shifts and stateful failover behavior.

- Dependency slowdown tests: validate timeouts, circuit breakers, and caching.

- Capacity pressure: run CPU and memory stress and confirm autoscaling and backpressure.

- Runbook validation: prove the “restore” steps work in minutes, not hours.

Improving customer trust

Customers trust you when outages are rare and when your response is calm and clear when they do happen.

Chaos engineering supports that by reducing repeated incidents and making your response predictable.

- Communicate early: acknowledge impact and share what users should do next.

- Share a clear timeline: what happened, what was affected, and what is restored.

- Prevent repeats: publish what changed, and re-test with the same experiment.

Fostering a Culture of Resilience

Tools don't create resilience, teams do.

When people feel safe to report risk, run experiments, and write honest postmortems, systems improve faster and on-call gets calmer.

- Make experiments normal: small and frequent builds confidence.

- Reward learning: celebrate “we found a weakness” the same way you celebrate “it passed.”

- Remove blame: focus on conditions and fixes, not personal fault.

Psychological safety for teams

Psychological safety is not a “soft” topic in software engineering. It directly affects how quickly engineers report risk and how honestly teams learn from incidents.

Google's Project Aristotle, shared publicly in 2019, highlighted psychological safety as a key dynamic for effective teams.

- Run blameless postmortems: write down what happened and what changed, without finger-pointing.

- Invite dissent: ask, “What could make this experiment unsafe?” before you run it.

- Protect on-call: reduce paging noise so people can think during real incidents.

- Practice the stop button: make “abort the experiment” a normal, respected action.

Encouraging experimentation and innovation

We get the best results when experiments are treated like code: versioned, reviewed, and repeatable across environments.

- Start with templates: pick common faults like pod kill, network delay, and CPU stress.

- Add guardrails early: permissions, namespaces, and stop rules.

- Automate one path: run a small automated experiment after key releases.

- Teach through pairing: a dedicated chaos “coach” accelerates adoption across teams.

Conclusion

We've learned to test distributed systems, not hope they hold. Chaos engineering makes failure visible, measurable, and fixable, especially in microservices where small issues can cascade.

When we keep experiments small, timed, and reversible, we protect customers while building real confidence in our production environment.

Other Articles

We build the engineering. You build the business.

If you are trying to figure out whether SWARECO is the right fit for what you are building, the best way to find out is to talk. Tell us what you have. We will be direct about what we can do and how we would approach it.

.jpg)

.jpg)

.jpg)